Topics covered in this post

- How to calculate correlations between asset classes using Pandas

- How to use NetworkX to analyse and manipulate network data structures

- How to make an interactive network visualisation using Plotly

In this tutorial, we will build the interactive visualisation embedded below to visualise correlations between different asset classes. This can be a very useful method for understanding and identifying which types of assets behave in a similar fashion.

The code and raw data for this tutorial are available in this GitHub repository.

⬇️ Final visualisation we will create during this post ⬇️

Introduction

We are told that equities and bonds tend to move in opposite directions and that certain assets, such as gold and the Japanese Yen, are ‘safe-haven’ assets and therefore behave in similar ways.

But what does that actually look like and are there any other interesting relationships between asset classes?

Networks can be a great way to convey this complex information at a high level and help understand broad market dynamics.

Information on the correlations between asset classes can be particularly useful for investors wanting to diversify their portfolio. Most people understand that portfolio risk can be mitigated by diversification, but this is not always the case. For instance, if you ‘diversified’ your portfolio by investing in two different stocks which are strongly correlated with each other (e.g. when stock A goes up 2%, stock B also tends to go up by about 2%), then the overall risk of the portfolio has barely been reduced. If there is a negative factor affecting stock A then it is also likely to affect stock B and both prices will trend downwards, which is not an ideal situation.

Therefore, diversification is only realistically achieved by investing in assets that are uncorrelated with each other.
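To make the diversification argument concrete, here is a minimal sketch using the standard two-asset portfolio variance formula. The weights and volatilities are made-up illustrative numbers and are not taken from the dataset used in this post.

```python
import numpy as np


def two_asset_volatility(w_a, sigma_a, sigma_b, rho):
    """Volatility of a portfolio holding weight w_a in asset A and 1 - w_a in asset B."""
    w_b = 1.0 - w_a
    variance = (
        (w_a * sigma_a) ** 2
        + (w_b * sigma_b) ** 2
        + 2 * w_a * w_b * sigma_a * sigma_b * rho
    )
    return float(np.sqrt(variance))


# hypothetical inputs: equal weights, both assets with 20% volatility
for rho in (1.0, 0.5, 0.0, -0.5):
    vol = two_asset_volatility(0.5, 0.20, 0.20, rho)
    print(f"correlation = {rho:+.1f} -> portfolio volatility = {vol:.3f}")
```

With perfectly correlated assets the portfolio is exactly as volatile as either asset on its own; the benefit only appears as the correlation falls.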

In this post, I will demonstrate how to use two popular Python libraries, NetworkX and Plotly, to manipulate the data as a network and visualise relationships between asset classes in an interactive way.

Data Preparation

Library imports

We will require the following Python libraries:

- numpy and pandas to read and manipulate the raw data

- networkx to generate the network representation of the data and create some initial graph visualisations

- matplotlib and seaborn for general visualisations and plot formatting

- Plotly to create the final interactive visualisation

import matplotlib.pyplot as plt

import networkx as nx

import numpy as np

import pandas as pd

import plotly.graph_objs as go

import seaborn as sns

from IPython.display import display, HTML

from plotly.offline import init_notebook_mode

# plotly offline mode

init_notebook_mode(connected=True)

Load data

For this analysis we will use a dataset containing the daily adjusted closing prices of 39 major ETFs that represent different asset classes covering equities, bonds, currencies and commodities. The data covers a period of 4 years between November 2013 and November 2017.

The data is available in the data/daily_asset_prices.csv file in the GitHub repository.

# read csv file

raw_asset_prices_df = pd.read_csv("data/daily_asset_prices.csv", index_col="Date")

# show first five rows

raw_asset_prices_df.head()

| Date | Bonds Global | Commodities | DOW | Emerg Markets | EAFE | Emerg Markets Bonds | Pacifix ex Japan | Germany | Italy | Japan | ... | Europe | Pacific | VXX | Materials | Energy | Finance | Tech | Utilities | ST Corp Bond | CHF |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 2017-11-08 | 81.83 | 16.40 | 235.46 | 46.78 | 69.87 | 114.60 | 47.69 | 33.18 | 30.95 | 60.02 | ... | 58.20 | 72.77 | 33.53 | 58.70 | 69.82 | 26.25 | 64.01 | 55.70 | 104.96 | 94.5100 |

| 2017-11-07 | 81.89 | 16.43 | 235.42 | 46.56 | 69.64 | 114.65 | 47.22 | 33.07 | 31.09 | 59.65 | ... | 58.17 | 72.20 | 33.52 | 58.64 | 70.16 | 26.38 | 63.66 | 55.66 | 105.01 | 94.5400 |

| 2017-11-06 | 81.86 | 16.53 | 235.41 | 46.86 | 69.90 | 115.26 | 47.20 | 33.34 | 31.22 | 59.18 | ... | 58.67 | 71.98 | 33.34 | 58.58 | 70.25 | 26.75 | 63.63 | 55.00 | 105.00 | 94.7500 |

| 2017-11-03 | 81.80 | 16.22 | 235.18 | 46.34 | 69.80 | 115.42 | 47.09 | 33.39 | 31.22 | 59.19 | ... | 58.58 | 71.88 | 33.66 | 58.83 | 68.68 | 26.78 | 63.49 | 55.21 | 105.00 | 94.4400 |

| 2017-11-02 | 81.73 | 16.12 | 234.96 | 46.58 | 69.91 | 116.15 | 47.31 | 33.50 | 31.43 | 59.05 | ... | 58.69 | 71.89 | 33.71 | 58.86 | 68.48 | 26.89 | 62.99 | 55.01 | 105.04 | 94.6299 |

5 rows × 39 columns

# get number of rows and columns of the dataset

df_shape = raw_asset_prices_df.shape

print(f"There are {df_shape[0]} rows and {df_shape[1]} columns in the dataset")

print(

(

f"Data timeperiod covers: {min(raw_asset_prices_df.index)} "

f"to {max(raw_asset_prices_df.index)}"

)

)

There are 1013 rows and 39 columns in the dataset

Data timeperiod covers: 2013-11-01 to 2017-11-08

We can see that the csv file of asset prices contains 39 different assets and 1013 records for each asset. The timeseries is in reverse order (newest to oldest) and there are no missing data points in the dataset.

Convert to log daily returns

Before calculating the correlation matrix, it is important to first normalise the dataset and convert the absolute asset prices into daily returns.

In financial timeseries it is common to make this transformation as investors are typically interested in returns on assets rather than their absolute prices. Normalising the data in this way also allows us to compare the returns of two assets more easily, regardless of their price levels.
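As a minimal sketch of the transformation, the example below computes log returns on a few made-up prices (hypothetical values, oldest first) and checks two properties: log returns add up over time, and applying diff(-1) to a newest-first series gives the same values.

```python
import numpy as np
import pandas as pd

# hypothetical prices, oldest first
prices = pd.Series(
    [100.0, 102.0, 101.0, 105.0],
    index=pd.date_range("2017-11-01", periods=4),
)

# daily log return: log(P_t / P_{t-1})
log_returns = np.log(prices / prices.shift(1)).dropna()

# log returns are additive: their sum is the log return over the whole period
total_log_return = log_returns.sum()
print(np.isclose(total_log_return, np.log(prices.iloc[-1] / prices.iloc[0])))

# the same calculation on a newest-first series uses diff(-1)
prices_newest_first = prices.sort_index(ascending=False)
log_returns_reversed = np.log(prices_newest_first).diff(-1).dropna()
print(np.allclose(log_returns_reversed.sort_index().values, log_returns.values))
```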

# create empty dataframe for log returns information

log_returns_df = pd.DataFrame()

# calculate log returns of each asset

# loop through each column in the dataframe and calculate the daily log returns

# add each log returns column to the new dataframe

for col in raw_asset_prices_df.columns:

    # dates are given in reverse order so need to set diff to -1.

    log_returns_df[col] = np.log(raw_asset_prices_df[col]).diff(-1)

# check output of log returns dataframe

log_returns_df.head()

| Date | Bonds Global | Commodities | DOW | Emerg Markets | EAFE | Emerg Markets Bonds | Pacifix ex Japan | Germany | Italy | Japan | ... | Europe | Pacific | VXX | Materials | Energy | Finance | Tech | Utilities | ST Corp Bond | CHF |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 2017-11-08 | -0.000733 | -0.001828 | 0.000170 | 0.004714 | 0.003297 | -0.000436 | 0.009904 | 0.003321 | -0.004513 | 0.006184 | ... | 0.000516 | 0.007864 | 0.000298 | 0.001023 | -0.004858 | -0.004940 | 0.005483 | 0.000718 | -0.000476 | -0.000317 |

| 2017-11-07 | 0.000366 | -0.006068 | 0.000042 | -0.006423 | -0.003727 | -0.005306 | 0.000424 | -0.008131 | -0.004173 | 0.007911 | ... | -0.008559 | 0.003052 | 0.005384 | 0.001024 | -0.001282 | -0.013928 | 0.000471 | 0.011929 | 0.000095 | -0.002219 |

| 2017-11-06 | 0.000733 | 0.018932 | 0.000977 | 0.011159 | 0.001432 | -0.001387 | 0.002333 | -0.001499 | 0.000000 | -0.000169 | ... | 0.001535 | 0.001390 | -0.009552 | -0.004259 | 0.022602 | -0.001121 | 0.002203 | -0.003811 | 0.000000 | 0.003277 |

| 2017-11-03 | 0.000856 | 0.006184 | 0.000936 | -0.005166 | -0.001575 | -0.006305 | -0.004661 | -0.003289 | -0.006704 | 0.002368 | ... | -0.001876 | -0.000139 | -0.001484 | -0.000510 | 0.002916 | -0.004099 | 0.007906 | 0.003629 | -0.000381 | -0.002009 |

| 2017-11-02 | 0.000979 | 0.006223 | 0.003283 | 0.001289 | 0.003008 | 0.002759 | 0.005723 | 0.004488 | 0.009591 | 0.001186 | ... | 0.002559 | 0.002089 | -0.011210 | -0.007279 | -0.002916 | 0.009341 | 0.000476 | 0.003642 | 0.000000 | 0.004553 |

5 rows × 39 columns

Calculate correlations matrix

To calculate the pairwise correlations between assets we can simply use the inbuilt pandas corr() method, which computes Pearson correlation coefficients by default.
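As a rough illustration of what corr() computes, the sketch below compares a hand-written Pearson correlation with the pandas result on synthetic data. The column names asset_a and asset_b and the helper pearson are hypothetical and only serve this example.

```python
import numpy as np
import pandas as pd

# synthetic returns for two hypothetical assets
rng = np.random.default_rng(0)
x = rng.normal(size=250)
y = 0.6 * x + rng.normal(size=250)
df = pd.DataFrame({"asset_a": x, "asset_b": y})


def pearson(a, b):
    """Pearson correlation: covariance divided by the product of standard deviations."""
    a_centred = a - a.mean()
    b_centred = b - b.mean()
    return (a_centred * b_centred).sum() / np.sqrt(
        (a_centred ** 2).sum() * (b_centred ** 2).sum()
    )


manual_value = pearson(df["asset_a"], df["asset_b"])
pandas_value = df.corr().loc["asset_a", "asset_b"]
print(np.isclose(manual_value, pandas_value))
```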

# calculate correlation matrix using inbuilt pandas function

correlation_matrix = log_returns_df.corr()

# show first five rows of the correlation matrix

correlation_matrix.head()

| | Bonds Global | Commodities | DOW | Emerg Markets | EAFE | Emerg Markets Bonds | Pacifix ex Japan | Germany | Italy | Japan | ... | Europe | Pacific | VXX | Materials | Energy | Finance | Tech | Utilities | ST Corp Bond | CHF |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| Bonds Global | 1.000000 | -0.086234 | -0.279161 | -0.069623 | -0.177521 | 0.296679 | -0.104739 | -0.188547 | -0.201014 | -0.159231 | ... | -0.181249 | -0.141707 | 0.222886 | -0.220603 | -0.198536 | -0.420264 | -0.197672 | 0.301774 | 0.598105 | 0.250142 |

| Commodities | -0.086234 | 1.000000 | 0.305137 | 0.428909 | 0.369637 | 0.313791 | 0.400316 | 0.283711 | 0.331083 | 0.250509 | ... | 0.367019 | 0.341089 | -0.224835 | 0.429541 | 0.677430 | 0.257454 | 0.225463 | 0.089290 | 0.028872 | 0.042324 |

| DOW | -0.279161 | 0.305137 | 1.000000 | 0.719260 | 0.793387 | 0.343817 | 0.688344 | 0.722882 | 0.666315 | 0.691672 | ... | 0.765251 | 0.769604 | -0.797538 | 0.817422 | 0.655544 | 0.874902 | 0.849271 | 0.395247 | -0.042967 | -0.172730 |

| Emerg Markets | -0.069623 | 0.428909 | 0.719260 | 1.000000 | 0.796425 | 0.580454 | 0.815049 | 0.697222 | 0.660268 | 0.638955 | ... | 0.763111 | 0.811155 | -0.668680 | 0.674630 | 0.607172 | 0.606749 | 0.686458 | 0.365031 | 0.123624 | -0.018461 |

| EAFE | -0.177521 | 0.369637 | 0.793387 | 0.796425 | 1.000000 | 0.466883 | 0.790699 | 0.884385 | 0.830052 | 0.785198 | ... | 0.949368 | 0.872972 | -0.721559 | 0.720030 | 0.610561 | 0.711714 | 0.725415 | 0.352270 | 0.050121 | 0.021760 |

5 rows × 39 columns

The conventional way to visualise correlations is via a heatmap. Before developing the network visualisation, we will quickly create a heatmap of the correlation matrix to check the output of the correlation calculations and to gain a high-level insight into some of the relationships present in the data.

Seaborn has a very useful function called clustermap which visualises the matrix as a heatmap but also clusters the ETFs so that ETFs which behave similarly are next to each other.

Clustered heatmaps can be a useful way of visualising correlations between attributes in a dataset, especially if the data is highly dimensional as it automatically reorders attributes that are similar to each other into clusters. This makes the heatmap more structured and readable so it is easier to identify relationships between ETFs and which asset classes behave similarly.

# visualise correlation matrix using a clustered heatmap

sns.clustermap(correlation_matrix, cmap="RdYlGn")

plt.show()

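The heatmap is colour coded using a divergent colour scale where strong positive correlations (towards correlation = 1) are dark green, uncorrelated assets (correlation close to 0) are yellow and negatively correlated assets (towards correlation = -1) are red. Note that no vmin/vmax is passed to clustermap here, so the colour scale spans the range of values in the data rather than exactly -1 to 1.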

The heatmap visualisation gives a good picture of the data and already tells an interesting story:

- Broadly speaking, there are two major clusters of assets.

- These appear to be separated into equities and “non-equity” assets (e.g. bonds, currencies and precious metals).

- The heatmap shows that these two categories are generally negatively or non-correlated with each other.

- This is expected as ‘safe-haven’ assets such as bonds, gold and currencies like the Japanese Yen tend to move in opposite directions to equities which are seen as a riskier asset class.

- ETFs tracking geographic regions which are close to each other are highly correlated with each other.

- For example, UK, Europe, Germany, Italy and France ETFs are highly correlated with each other, as are Japan, Pacific ex Japan and China ETFs.

- The VXX ETF is strongly negatively correlated with equities.

Network Visualisations with NetworkX

Heatmaps are useful; however, they can only convey one dimension of information (the magnitude of the correlation between two assets). For an investor deciding which asset classes to invest in, a heatmap still does not help answer important questions such as what the annualized returns and volatility of an asset class are.

We can use network graphs to investigate the initial findings from the heatmap further and visualise them in a more accessible way that encodes more information.

NetworkX is one of the most popular and useful Python libraries for analysing small/medium size networks.

Create Network Data Structure

To analyse the correlations as a network, we need to convert the correlations matrix into an edge list.

In the network, each asset class will be represented by a ‘node’ and the connections between nodes (‘edges’), will be given a numerical value corresponding to the correlation between the node pairs.

# convert matrix to list of edges and rename the columns

edges = correlation_matrix.stack().reset_index()

edges.columns = ["asset_1", "asset_2", "correlation"]

# remove self correlations

edges = edges.loc[edges["asset_1"] != edges["asset_2"]].copy()

# show the first 5 rows of the edge list dataframe.

edges.head()

| | asset_1 | asset_2 | correlation |

|---|---|---|---|

| 1 | Bonds Global | Commodities | -0.086234 |

| 2 | Bonds Global | DOW | -0.279161 |

| 3 | Bonds Global | Emerg Markets | -0.069623 |

| 4 | Bonds Global | EAFE | -0.177521 |

| 5 | Bonds Global | Emerg Markets Bonds | 0.296679 |

Now that we have an edge list, we need to feed it into the networkx library to create the graph (‘graph’ is simply an alternative name for a network). This can be done using the convenient inbuilt from_pandas_edgelist function.

Note that this network is undirected as the correlation between assets is the same in both directions.
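Because the correlation matrix is symmetric, each pair of assets appears twice in the stacked edge list. One possible alternative, sketched here on a toy 3x3 matrix with hypothetical asset names A, B and C, is to build the edge list from unique pairs only. The resulting undirected graph is the same.

```python
from itertools import combinations

import networkx as nx
import pandas as pd

# toy correlation matrix for three hypothetical assets
toy_corr = pd.DataFrame(
    [[1.0, 0.8, -0.2], [0.8, 1.0, 0.1], [-0.2, 0.1, 1.0]],
    index=["A", "B", "C"],
    columns=["A", "B", "C"],
)

# one row per unordered pair, so no self correlations and no duplicates
rows = [(a, b, toy_corr.loc[a, b]) for a, b in combinations(toy_corr.columns, 2)]
toy_edges = pd.DataFrame(rows, columns=["asset_1", "asset_2", "correlation"])

toy_graph = nx.from_pandas_edgelist(
    toy_edges, "asset_1", "asset_2", edge_attr=["correlation"]
)

# n assets give n * (n - 1) / 2 unique pairs
n = len(toy_corr.columns)
print(len(toy_edges), n * (n - 1) // 2)
```

For the 39 ETFs in this post the same formula gives 39 * 38 / 2 = 741 unique pairs.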

# create undirected graph with weights corresponding to the correlation magnitude

G0 = nx.from_pandas_edgelist(edges, "asset_1", "asset_2", edge_attr=["correlation"])

# print out the graph info

# check number of nodes and degrees are as expected

# (all should have degree = 38, i.e. average degree = 38)

# note: nx.info was removed in NetworkX 3.0; on newer versions use print(G0)

print(nx.info(G0))

Name:

Type: Graph

Number of nodes: 39

Number of edges: 741

Average degree: 38.0000

The output of the nx.info function verifies that we have created a graph with 39 nodes as expected (we have 39 different asset classes) and the ‘average degree’ - which is the average number of connections each node has - is 38. This means that every node is currently connected to every other node in the network (except for itself).
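The same checks can be reproduced directly from the graph object, which may be handy on NetworkX versions where nx.info is not available. This is a small sketch on a stand-in complete graph with 39 nodes rather than the actual asset graph.

```python
import networkx as nx

# stand-in for the asset graph: every node connected to every other node
G_complete = nx.complete_graph(39)

n_nodes = G_complete.number_of_nodes()
n_edges = G_complete.number_of_edges()

# each edge contributes to the degree of two nodes
average_degree = 2 * n_edges / n_nodes

print(f"nodes: {n_nodes}, edges: {n_edges}, average degree: {average_degree:.4f}")
```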

Visualise the network

NetworkX provides a plotting function to visualise the graph using matplotlib. There are a number of different ‘layouts’ which we can try out-of-the-box, including:

- circular_layout - Position nodes on a circle

- random_layout - Position nodes uniformly at random in the unit square

- spectral_layout - Position nodes using the eigenvectors of the graph Laplacian

- spring_layout - Position nodes using the Fruchterman-Reingold force-directed algorithm

# create subplots

fig, axs = plt.subplots(nrows=2, ncols=2, figsize=(20, 20))

# save different layout functions in a list

layouts = [nx.circular_layout, nx.random_layout, nx.spring_layout, nx.spectral_layout]

# plot each different layout

for layout, ax in zip(layouts, axs.ravel()):

    nx.draw(

        G0,

        with_labels=True,

        node_size=700,

        node_color="#e1575c",

        edge_color="#363847",

        pos=layout(G0),

        ax=ax,

    )

    ax.set_title(layout.__name__, fontsize=20, fontweight="bold")

plt.show()

Whilst these visualisations may look cool, they are not very useful for conveying the information about the relationships between each asset. Let’s make some improvements!

Improved network visualisation

The network visualisation can be improved in several ways by thinking about the sort of information that we are looking to uncover from this analysis.

For this analysis, we will assume that the audience for this visualisation will be investors wanting to assess risk in their portfolio.

Investors would want to identify which assets are correlated and uncorrelated with each other to assess the unsystematic risk in their portfolio. Therefore, from this visualisation the user would want to quickly understand:

- Which assets show the strongest/most meaningful correlations (i.e. >0.5) with each other?

- Are these correlations positive or negative?

- Which are the most/least ‘connected’ assets? (i.e. which assets share the most/least strong correlations with other assets in the dataset)

- Which groups of assets behave similarly? (i.e. which assets are correlated with the same type of other assets)

With this information, an investor could identify if they held a number of assets that behave the same (increased risk) and identify assets that show very few correlations with assets currently held in the portfolio and investigate these as a potential opportunity for diversification.

Let’s make the following changes to improve readability and aid usability:

- reduce the number of connections between nodes by filtering out connections below a certain threshold value for the strength of correlation

- introduce colour to signify positive or negative correlations

- scale the edge thickness to indicate the magnitude of the correlation

- scale the size of nodes to indicate which assets have the greatest number of strong correlations with the rest of the assets in the dataset

Remove edges below a threshold

# 'winner takes all' method - set minimum correlation threshold to remove some

# edges from the diagram

threshold = 0.5

# create a new graph from edge list

Gx = nx.from_pandas_edgelist(edges, "asset_1", "asset_2", edge_attr=["correlation"])

# list to store edges to remove

remove = []

# loop through edges in Gx and find correlations which are below the threshold

for asset_1, asset_2 in Gx.edges():

    corr = Gx[asset_1][asset_2]["correlation"]

    # add to the list of edges to remove if abs(corr) < threshold

    if abs(corr) < threshold:

        remove.append((asset_1, asset_2))

# remove edges contained in the remove list

Gx.remove_edges_from(remove)

print(str(len(remove)) + " edges removed")

530 edges removed
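An alternative, sketched below on a toy edge list with hypothetical assets A to D, is to filter the edge list DataFrame before building the graph. One caveat with this approach is that assets with no strong correlations would disappear from the graph, so they are added back explicitly as isolated nodes.

```python
import networkx as nx
import pandas as pd

# toy edge list for four hypothetical assets
toy_edges = pd.DataFrame(
    {
        "asset_1": ["A", "A", "B", "A", "B", "C"],
        "asset_2": ["B", "C", "C", "D", "D", "D"],
        "correlation": [0.8, -0.6, 0.1, 0.2, -0.3, 0.05],
    }
)
threshold = 0.5

# keep only edges whose absolute correlation reaches the threshold
strong_edges = toy_edges[toy_edges["correlation"].abs() >= threshold]
G_strong = nx.from_pandas_edgelist(
    strong_edges, "asset_1", "asset_2", edge_attr=["correlation"]
)

# add back every asset so weakly correlated ones remain as isolated nodes
all_assets = set(toy_edges["asset_1"]) | set(toy_edges["asset_2"])
G_strong.add_nodes_from(all_assets)

print(f"{len(toy_edges) - len(strong_edges)} edges removed")
```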

Create colour, edge thickness and node size features

def assign_colour(correlation):

    if correlation <= 0:

        return "#ffa09b" # red

    else:

        return "#9eccb7" # green

def assign_thickness(correlation, benchmark_thickness=2, scaling_factor=3):

    return benchmark_thickness * abs(correlation) ** scaling_factor

def assign_node_size(degree, scaling_factor=50):

    return degree * scaling_factor

# assign colours to edges depending on positive or negative correlation

# assign edge thickness depending on magnitude of correlation

edge_colours = []

edge_width = []

for key, value in nx.get_edge_attributes(Gx, "correlation").items():

    edge_colours.append(assign_colour(value))

    edge_width.append(assign_thickness(value))

# assign node size depending on number of connections (degree)

node_size = []

for key, value in dict(Gx.degree).items():

    node_size.append(assign_node_size(value))

Plot improved visualisation

# draw improved graph

sns.set(rc={"figure.figsize": (9, 9)})

font_dict = {"fontsize": 18}

nx.draw(

Gx,

pos=nx.circular_layout(Gx),

with_labels=True,

node_size=node_size,

node_color="#e1575c",

edge_color=edge_colours,

width=edge_width,

)

plt.title("Asset price correlations", fontdict=font_dict)

plt.show()

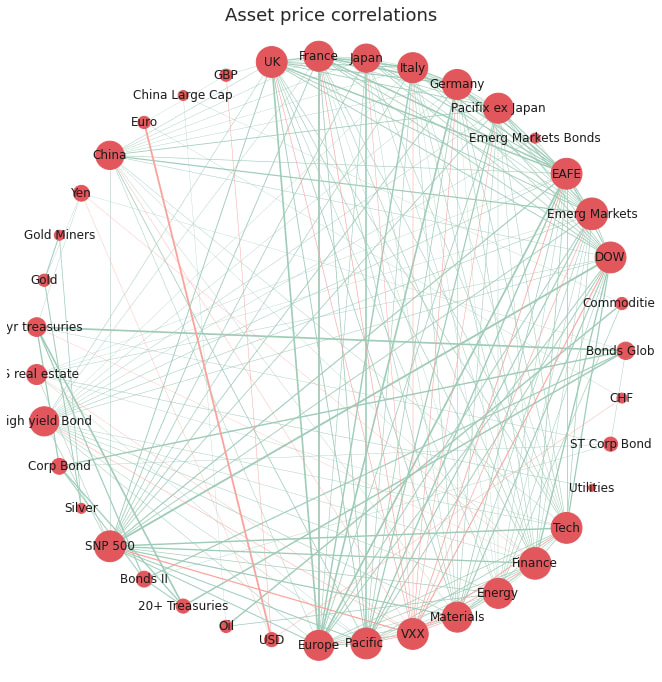

The network visualisation has been improved in four main ways:

- less cluttered: we have removed edges with weak correlations and kept only the edges with significant (actionable) correlations

- identify the type of correlation: simple intuitive colour scheme to show positive (green) or negative (red) correlations

- identify the strength of correlation: we now can assess the relative strength of correlations between nodes, with prominence given to the correlations with the greatest magnitude

- identify the most connected nodes: the size of the nodes has been adjusted to emphasise which nodes have the greatest number of strong correlations with other nodes in the network

Looking at this network we could now quickly identify which assets are highly correlated and therefore may pose an increased risk on the portfolio. The viewer could also identify and investigate further the assets with a low degree of connectivity (smaller node size) as these are weakly correlated with the other assets in the sample and may provide an opportunity to diversify the portfolio.

The circular layout, however, does not group the nodes in a meaningful order; it simply places them in the order in which they were created. It is therefore difficult to see which assets are most similar to each other in terms of their correlations to other nodes.

We can improve this with a spring-based layout using the ‘Fruchterman-Reingold’ algorithm [2], which treats edges like springs that pull connected nodes together while all nodes repel each other. Because only strongly correlated assets are still connected after thresholding, the algorithm tends to cluster the nodes which are strongly correlated with each other, allowing the viewer to quickly identify groups of assets with similar properties.
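Two details of the spring layout are worth a small sketch. The layout starts from random positions, so it changes between runs unless a seed is passed, and it reads edge strengths from the "weight" attribute by default. The example below uses a toy graph and a hypothetical abs_corr attribute to show both options; it is an illustration and not the configuration used for the figures in this post.

```python
import networkx as nx
import numpy as np

# toy graph with correlations stored on the edges (hypothetical values)
G_toy = nx.Graph()
G_toy.add_edge("A", "B", correlation=0.9)
G_toy.add_edge("B", "C", correlation=0.8)
G_toy.add_edge("C", "D", correlation=-0.6)

# the layout expects non-negative weights, so store the magnitude separately
for _, _, data in G_toy.edges(data=True):
    data["abs_corr"] = abs(data["correlation"])

# weight= names the edge attribute to use; seed= makes the layout reproducible
pos = nx.spring_layout(G_toy, weight="abs_corr", seed=42)
pos_again = nx.spring_layout(G_toy, weight="abs_corr", seed=42)

print(all(np.allclose(pos[n], pos_again[n]) for n in G_toy.nodes()))
```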

# draw improved graph

nx.draw(

Gx,

pos=nx.fruchterman_reingold_layout(Gx),

with_labels=True,

node_size=node_size,

node_color="#e1575c",

edge_color=edge_colours,

width=edge_width,

)

plt.title("Asset price correlations - Fruchterman-Reingold layout", fontdict=font_dict)

plt.show()

The Fruchterman-Reingold layout has successfully grouped the assets into clusters of strongly correlated assets. As seen before in the heatmap visualisation, there are distinct clusters of assets which behave similarly to each other. There is a cluster containing bond ETFs, a cluster of precious metal ETFs (silver, gold, goldminers), a cluster of currencies (CHF, USD, Euro) and a large cluster for equities.

However, the main cluster of equities ETFs is still very cluttered as the nodes are very tightly packed and the node sizes and labels are overlapping, making it difficult to make out.

As the layout now places strongly correlated nodes close together, it is no longer necessary to keep every single edge: it is implied that assets closer to each other in space are more strongly correlated. We can also convert the node sizes back to a consistent (smaller) size, as the degree of each node will no longer be meaningful once edges are removed.

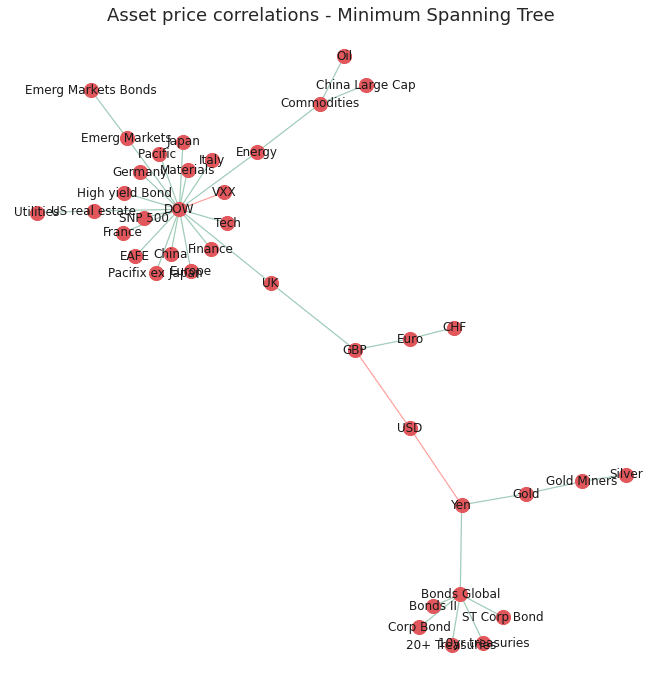

Minimum spanning tree

It is common in financial networks to use a minimum spanning tree [3,4,5] to visualise networks.

A minimum spanning tree reduces the edges down to a subset of edges which connects all the nodes together, without any cycles and with the minimum possible sum of edge weights (correlation value in this case). This essentially provides a skeleton of the graph, minimising the number of edges and reducing the clutter in the network graph.

Kruskal’s algorithm is a common way to calculate the minimum spanning tree and is fairly intuitive. However, NetworkX has an inbuilt function which calculates the minimum spanning tree for us (using Kruskal’s algorithm by default).
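One point to be aware of is which edge attribute the spanning tree minimises. A common choice in the financial networks literature (for example Mantegna [3]) is to convert each correlation into a distance, d = sqrt(2 * (1 - correlation)), so that strongly correlated assets are "close" and the minimum spanning tree keeps the strongest links. The sketch below applies this to a toy graph; the distance attribute and the toy correlations are assumptions for illustration and are not part of the code used elsewhere in this post.

```python
import math

import networkx as nx

# toy correlations between four hypothetical assets
toy_correlations = [
    ("A", "B", 0.9),
    ("B", "C", 0.8),
    ("A", "C", 0.3),
    ("C", "D", -0.5),
    ("B", "D", -0.1),
]

G_toy = nx.Graph()
for asset_1, asset_2, rho in toy_correlations:
    # higher correlation -> smaller distance
    distance = math.sqrt(2 * (1 - rho))
    G_toy.add_edge(asset_1, asset_2, correlation=rho, distance=distance)

# tell networkx explicitly which attribute to minimise
toy_mst = nx.minimum_spanning_tree(G_toy, weight="distance", algorithm="kruskal")

print(sorted(tuple(sorted(edge)) for edge in toy_mst.edges()))
```

A tree connecting n nodes always has n - 1 edges, so the four-node toy graph is reduced from five edges to three.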

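# create minimum spanning tree layout from Gx

# (after small correlations have been removed)

# note: minimum_spanning_tree reads the "weight" edge attribute by default;

# the edges here only carry "correlation", so each edge is treated as weight 1

mst = nx.minimum_spanning_tree(Gx)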

edge_colours = []

# assign edge colours

for key, value in nx.get_edge_attributes(mst, "correlation").items():

    edge_colours.append(assign_colour(value))

# draw minimum spanning tree. Set node size and width to constant

nx.draw(

mst,

with_labels=True,

pos=nx.fruchterman_reingold_layout(mst),

node_size=200,

node_color="#e1575c",

edge_color=edge_colours,

width=1.2,

)

# set title

plt.title("Asset price correlations - Minimum Spanning Tree", fontdict=font_dict)

plt.show()

The improved graph has made the clusters of nodes more readable by reducing the node size and reducing the number of edges in the graph. However, reducing the clutter came at the expense of some information about the nodes, such as which nodes have the greatest number of strong correlations and the relative strengths of those correlations.

Now we have a useable network and layout we can make it interactive using Plotly!

Interactive visualisation with Plotly

Interactive visualisations can greatly enhance the user experience and help encode more information through the use of informative tooltips.

Currently the network is very ‘static’ and does not convey any information other than spatial relationships between strongly correlated assets. Although useful, this logically leads the viewer to ask more questions about the properties of each individual node in a cluster, such as its historical returns and the nodes with which it is least correlated.

One way to include such information about each node is to use an interactive graph with informative tooltips. This can be achieved using the Plotly [6] Python library, which offers a Python API to create interactive JavaScript graphs built on d3.js.

The main benefit of using Plotly in this example is the use of tooltips which can be used to store lots of additional information about each ETF. There are obviously many different bits of information of use that we could incorporate into the tooltip. For this example I will add the following attributes:

- annualised returns of the asset class

- annualised volatility of the asset class

- the top and bottom three assets which the asset of interest is most/least strongly correlated with
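The annualised figures follow from two simple scaling rules: mean daily log returns scale with the number of trading days, and daily volatility scales with its square root. A minimal sketch on synthetic daily returns, assuming 252 trading days per year as elsewhere in this post (the return series itself is made up):

```python
import numpy as np
import pandas as pd

TRADING_DAYS = 252

# synthetic daily log returns for one hypothetical asset (four years of data)
rng = np.random.default_rng(1)
daily_log_returns = pd.Series(rng.normal(loc=0.0004, scale=0.01, size=TRADING_DAYS * 4))

# log returns are additive, so the mean daily return scales linearly with time
annualised_return_pct = daily_log_returns.mean() * TRADING_DAYS * 100

# volatility scales with the square root of time
annualised_vol_pct = daily_log_returns.std(ddof=0) * np.sqrt(TRADING_DAYS) * 100

print(f"Annualised return: {annualised_return_pct:.1f}%")
print(f"Annualised volatility: {annualised_vol_pct:.1f}%")
```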

Creating Tooltips

The following functions calculate the above quantities. It should be noted that Plotly tooltips are formatted using HTML. Therefore the input to the tooltip should be a string with the relevant HTML tags for any formatting that is required.

def convert_rankings_to_string(ranking):

    """

    Concatenate list of nodes and correlations into a single html

    string (required format for the plotly tooltip)

    Inserts html "<br>" in between each item in order to add a new

    line in the tooltip

    """

    s = ""

    for r in ranking:

        s += r + "<br>"

    return s

def calculate_stats(returns=log_returns_df):

    """calculate annualised returns and volatility for all ETFs

    Returns:

        tuple: Outputs the annualised volatility and returns as lists of

            floats (for use in assigning node colours and sizes) and also

            as lists of formatted strings to be used in the tooltips.

    """

    # log returns are additive, 252 trading days

    # DataFrame.mean() gives one value per column (asset)

    annualized_returns = list(returns.mean() * 252 * 100)

    annualized_volatility = [

        np.std(returns[col] * 100) * (252 ** 0.5) for col in list(returns.columns)

    ]

    # create strings for the tooltip (formatted to 1 decimal place)

    annualized_volatility_2dp = [

        "Annualized Volatility: " "%.1f" % r + "%" for r in annualized_volatility

    ]

    annualized_returns_2dp = [

        "Annualized Returns: " "%.1f" % r + "%" for r in annualized_returns

    ]

    return (

        annualized_volatility,

        annualized_returns,

        annualized_volatility_2dp,

        annualized_returns_2dp,

    )

def get_top_and_bottom_three(df=correlation_matrix):

    """

    get a list of the top 3 and bottom 3 most/least correlated assets

    for each node (ranked by absolute correlation).

    Args:

        df (pd.DataFrame): pandas correlation matrix

    Returns:

        top_3_list (list): list of html strings containing the top 3

            correlations (name and value)

        bottom_3_list (list): list of html strings containing the bottom three

            correlations (name and value)

    """

    top_3_list = []

    bottom_3_list = []

    for col in df.columns:

        # positions of the rows sorted by absolute correlation (ascending)

        order = np.argsort(df[col].abs().to_numpy())

        # exclude self correlation (last position) and reverse the order

        top_3 = list(order[-4:-1][::-1])

        # bottom 3 list is returned in correct order

        bottom_3 = list(order[:3])

        # get column index

        col_index = df.columns.get_loc(col)

        # find values based on index locations

        top_3_values = [df.index[x] + ": %.2f" % df.iloc[x, col_index] for x in top_3]

        bottom_3_values = [

            df.index[x] + ": %.2f" % df.iloc[x, col_index] for x in bottom_3

        ]

        top_3_list.append(convert_rankings_to_string(top_3_values))

        bottom_3_list.append(convert_rankings_to_string(bottom_3_values))

    return top_3_list, bottom_3_list

Calculating Graph Coordinates

Plotly does not have an ‘out-of-the-box’ network graph chart, therefore, we need to ‘imitate’ the network layout by plotting the data as a scatter plot which plots the graph nodes, and plot a ‘line’ chart on top which draws the lines which connect each point.

To achieve this we need a function that converts the Fruchterman-Reingold coordinates calculated using networkx into an x and y series to create the scatterplot. We also need to store the x and y coordinates of the start and end of each edge which will be used to draw the ‘line’ chart for the edges.

The function below calculates the x and y coordinates for the scatter plot (Xnodes and Ynodes) and the coordinates of the starting and ending positions of the lines connecting nodes (Xedges, Yedges):

def get_coordinates(G=mst):

    """Returns the positions of nodes and edges in a format

    for Plotly to draw the network

    """

    # get list of node positions

    pos = nx.fruchterman_reingold_layout(G)

    Xnodes = [pos[n][0] for n in G.nodes()]

    Ynodes = [pos[n][1] for n in G.nodes()]

    Xedges = []

    Yedges = []

    for e in G.edges():

        # x and y coordinates of the two nodes defining the edge e;

        # the trailing None breaks the line so separate edges are not joined

        Xedges.extend([pos[e[0]][0], pos[e[1]][0], None])

        Yedges.extend([pos[e[0]][1], pos[e[1]][1], None])

    return Xnodes, Ynodes, Xedges, Yedges

Now we can use these functions to calculate all the quantities for the tooltips and calculate the coordinates.

# get statistics for tooltip

# make list of node labels.

node_label = list(mst.nodes())

# calculate annualised returns and annualised volatility (floats, plus strings formatted to 1dp)

annual_vol, annual_ret, annual_vol_2dp, annual_ret_2dp = calculate_stats()

# get top and bottom 3 correlations for each node

top_3_corrs, bottom_3_corrs = get_top_and_bottom_three()

# create tooltip string by concatenating statistics

description = [

f"<b>{node}</b>"

+ "<br>"

+ annual_ret_2dp[index]

+ "<br>"

+ annual_vol_2dp[index]

+ "<br><br>Strongest correlations with: "

+ "<br>"

+ top_3_corrs[index]

+ "<br>Weakest correlations with: "

+ "<br>"

+ bottom_3_corrs[index]

for index, node in enumerate(node_label)

]

# get coordinates for nodes and edges

Xnodes, Ynodes, Xedges, Yedges = get_coordinates()

# assign node colour depending on positive or negative annualised returns

node_colour = [assign_colour(i) for i in annual_ret]

# assign node size based on annualised returns size (scaled by a factor)

node_size = [abs(x) ** 0.5 * 5 for x in annual_ret]

Plot the Interactive Visualisation

To plot the network we define the line plot (tracer) for the edges and the scatter plot (tracer_marker) for the nodes, and define some cosmetic parameters which dictate the graph layout.

# Plot graph

# edges

tracer = go.Scatter(

x=Xedges,

y=Yedges,

mode="lines",

line=dict(color="#DCDCDC", width=1),

hoverinfo="none",

showlegend=False,

)

# nodes

tracer_marker = go.Scatter(

x=Xnodes,

y=Ynodes,

mode="markers+text",

textposition="top center",

marker=dict(size=node_size, line=dict(width=1), color=node_colour),

hoverinfo="text",

hovertext=description,

text=node_label,

textfont=dict(size=7),

showlegend=False,

)

axis_style = dict(

title=dict(text="", font=dict(size=20)),

showgrid=False,

zeroline=False,

showline=False,

ticks="",

showticklabels=False,

)

layout = dict(

title="Plotly - interactive minimum spanning tree",

width=800,

height=800,

autosize=False,

showlegend=False,

xaxis=axis_style,

yaxis=axis_style,

hovermode="closest",

plot_bgcolor="#fff",

)

fig = go.Figure()

fig.add_trace(tracer)

fig.add_trace(tracer_marker)

fig.update_layout(layout)

fig.show()

display(

HTML(

"""

<p>Node sizes are proportional to the size of

annualised returns.<br>Node colours signify positive

or negative returns since beginning of the timeframe.</p>

"""

)

)

Graph Info

Node sizes are proportional to the size of annualised returns

Node colours signify positive or negative returns since beginning of the timeframe

# Save interactive vis to json

fig.write_json("assets/plotly_asset_prices_network.json")

With this final network layout, we have summarised the most important information about the relationships between assets in the dataset. Users can quickly identify clusters of assets which are strongly correlated with each other, gauge the performance of the asset over the timeframe by looking at the node sizes and colours and can get more detailed information about each node by hovering over it.

Conclusion

In this post we have shown how to visualise a network of asset price correlations using the networkx Python library. The ‘out of the box’ networkx visualisations were iteratively improved to help the audience identify assets which behave similarly to other assets in the dataset. This was achieved by reducing redundant information in the network and using intuitive colour schemes, edge thickness and node size to convey information in a qualitative way. The functionality of the visualisation was further improved by the use of Plotly, an interactive graphing library, which allowed us to use tooltips to store more information about each node in the network without cluttering the visualisation.

Future Work

Further work could look at the rolling asset correlations over a shorter timeframe and see how these may have changed over time and how this then affects the network layout and clusters. This could help identify outliers or asset classes which are not behaving as ‘normal’. There are also many more interesting calculations which can be carried out on the network, such as working out which are the most important or influential nodes in the networks by using centrality measures [7].
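As a starting point for the rolling analysis, pandas can compute a correlation over a moving window. The sketch below uses synthetic returns for two hypothetical assets and an arbitrary 60-day window; rebuilding the whole network per window would follow the same idea applied to every pair of assets.

```python
import numpy as np
import pandas as pd

# synthetic daily returns for two hypothetical assets sharing a common factor
rng = np.random.default_rng(2)
n_days = 300
common_factor = rng.normal(size=n_days)
returns = pd.DataFrame(
    {
        "asset_a": common_factor + 0.5 * rng.normal(size=n_days),
        "asset_b": common_factor + 0.5 * rng.normal(size=n_days),
    },
    index=pd.bdate_range("2016-01-01", periods=n_days),
)

# correlation between the two assets over a moving 60-day window
window = 60
rolling_corr = returns["asset_a"].rolling(window).corr(returns["asset_b"])

# the first window - 1 values are NaN because the window is not yet full
print(rolling_corr.dropna().describe())
```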

The post focused on how to visualise the network using networkx and Plotly; however, there are many other libraries and software packages which could have been used and are worth investigating:

Open Source Python Libraries

- Graphviz

- Pygraphviz

Open Source Interactive Libraries

- d3.js

Network drawing Software

- Gephi (https://gephi.org/)

If you are interested in investigating network analytics in the financial industry in more detail, I would highly recommend checking out https://www.fnalab.com/ which is a commercial platform for financial network analytics. They offer a free trial of their platform, which has some very impressive network visualisations of the stock market as well as of financial transactions for fraud detection and many more applications.

References

[1] Networkx documentation: https://networkx.github.io/documentation/stable/reference/drawing.html

[2] Fruchterman, T.M. and Reingold, E.M., 1991. Graph drawing by force-directed placement. Software: Practice and Experience, 21(11), pp.1129-1164.

[3] Mantegna, R.N., 1999. Hierarchical structure in financial markets. The European Physical Journal B-Condensed Matter and Complex Systems, 11(1), pp.193-197.

[4] Rešovský, M., Horváth, D., Gazda, V. and Siničáková, M., 2013. Minimum Spanning Tree Application in the Currency Market. Biatec, 21(7), pp.21-23.

[5] Financial Network Analytics: https://www.fna.fi/

[6] Plotly: https://plot.ly/d3-js-for-python-and-pandas-charts/

[7] Wenyue Sun, Chuan Tian, Guang Yang, 2015, Network Analysis of the Stock Market